AI Didn't Arrive Overnight. I Was Reading About It in 1997

The narrative that artificial intelligence has suddenly burst into our lives is understandable, but it isn't true. Those of us who have worked in technology for decades have watched this moment being built, slowly and steadily, for thirty years.

The Overnight Myth

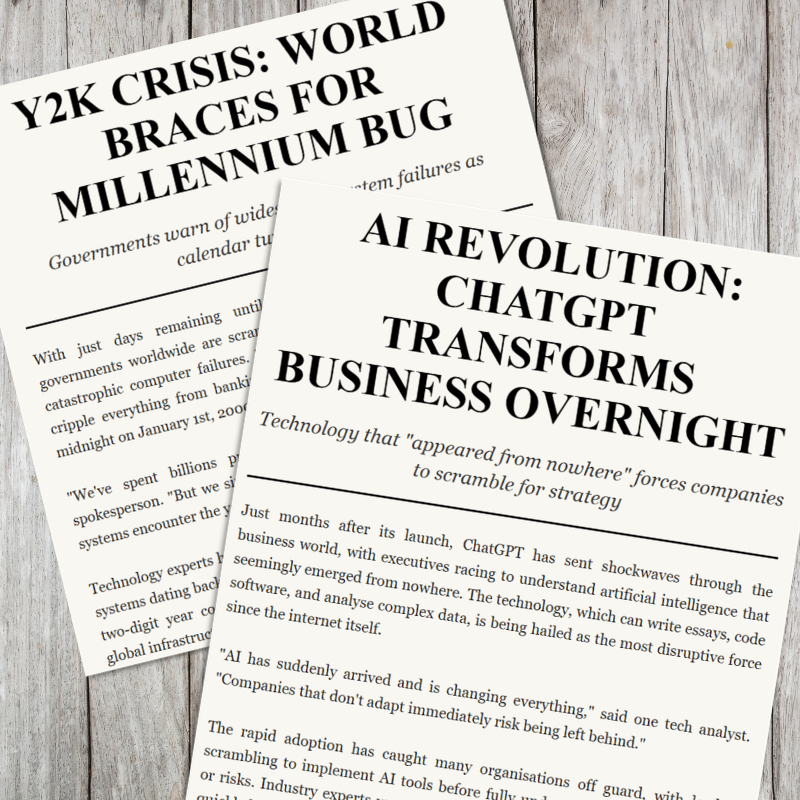

Scroll through almost any business publication today and you will encounter some version of the same sentence: "AI has suddenly arrived and is changing everything." I understand why that framing exists. For most people, the moment ChatGPT appeared in their browser in late 2022 felt like a rupture, a technology that seemed to materialise from nowhere and demand immediate attention.

But here is the thing: it didn't come from nowhere. And for those of us who have spent our careers in technology, this moment, as remarkable as it is, is the culmination of something we have been watching build for a very long time.

I know this because I was reading about neural networks, natural language processing, and language models in 1997.

A Book, a Cultural Archive, and a Class Prize

I was a computer science student in India in the late 1990s, at a time when the internet was a novelty and most of the world's attention in the technology sector was consumed by a single, looming problem: Y2K. The millennium bug dominated boardrooms, government briefings, and column inches. Would our systems survive the turn of the century? That was the question everyone was asking.

And yet, quietly and without fanfare, something far more consequential was being worked out in university labs and academic texts.

I came across a weighty AI textbook not in a university library or a technology bookshop, but in the reading room of the Indira Gandhi National Centre for the Arts in New Delhi. They had a small but quietly impressive technical collection, and this particular volume caught my eye: a comprehensive treatment of artificial intelligence covering neural networks, machine learning, natural language processing, and the architecture of intelligent agents.

I read it from cover to cover.

I was fascinated. It laid out the very ideas that underpin what we now call modern AI: how machines could learn from data, recognise patterns in language, and improve their own performance over time. These were not science fiction concepts. They were mathematical and computational frameworks, being refined and debated by serious researchers.

For one of my university assignments, we were asked to present on something we found genuinely exciting, something we believed had a future. I chose artificial intelligence. I used that book to build my presentation, stood up in front of my peers, and made the case that AI, not just rule-based systems but machines that could genuinely learn, was going to reshape how we live and work. My classmates were interested. My lecturers were impressed. The work was awarded the top mark in the assignment.

That was 1997, roughly a quarter of a century before "AI" became a boardroom buzzword.

Progress Doesn't Pause for the Headlines

There is a pattern in how new technologies are perceived. When they finally break through to mainstream consciousness, they appear sudden, as if they emerged fully formed overnight. But technology rarely works that way. What looks like a rupture is almost always the visible tip of decades of incremental progress.

While the world was fixated on Y2K, researchers were making foundational advances in machine learning. Through the 2000s, the rise of the internet produced the vast datasets that AI systems needed to learn from. In the 2010s, increases in computing power, particularly through graphics processors originally designed for gaming, unlocked the ability to train neural networks at a scale that had previously been impossible. The transformer architecture, introduced in the landmark 2017 paper "Attention Is All You Need", provided the technical backbone for the large language models we use today.

None of this happened suddenly. Every step was built on the last. The ideas I encountered in that 1997 textbook, the neural networks, the language models, the learning agents, are not historical curiosities. They are the direct ancestors of the AI tools now sitting on every business leader's desk.

Why This Matters for Organisations Today

I am not telling this story to be nostalgic. I am telling it because the "AI arrived suddenly" narrative has a real and damaging consequence: it encourages panic and short-term thinking.

When leaders believe a technology has emerged from nowhere, they reach for quick fixes. They adopt tools before they understand them. They chase the headline rather than building a thoughtful strategy. They treat AI as something to be bolted on to their existing operations rather than something to be understood, governed, and deployed responsibly.

But AI is not magic, and it is not a crisis. It is a maturing set of technologies with a long history, genuine strengths, real limitations, and significant ethical considerations that have been discussed in academic and policy circles for decades. The organisations that will navigate this well are not the ones that move fastest. They are the ones that move most thoughtfully.

That means understanding what these tools actually do, and what they don't. It means asking hard questions about data, bias, accountability, and the human impact of automation. It means having governance in place before you deploy, not after. And it means working with people who have spent years, in some cases decades, in this space.

Thirty Years On

I have now spent over thirty years working in technology, across IT consulting, digital transformation, systems optimisation, and responsible AI adoption. I have watched AI move from the pages of academic textbooks to the centre of global economic policy. I have advised boards, coached teams, and helped organisations translate complex AI concepts into practical workflows that actually improve how people work.

What I know from that vantage point is this: the technology is real, the potential is significant, and the risks are manageable, but only if you approach them seriously. The worst thing you can do is treat AI as a novelty that appeared last Tuesday and demands an immediate response. The best thing you can do is slow down enough to understand what you are actually dealing with.

The foundations of today's AI were laid long before it became a household name. The conversations about ethics, governance, and responsible deployment have been happening for just as long. The question is not whether your organisation needs to engage with AI. It almost certainly does. The question is whether you are going to engage with it wisely.

That, I would argue, is the work worth doing.

If you’d like to learn more about using AI in your organisation, book a free 15-minute needs assessment call with Arshad below!

Free 15-minute needs assessment call for Tech Optimisation coaching with Arshad, FRSA, in areas such as:

Utilising AI in your organisation

Optimising tech systems

Following the free call, you will be invited to book a 1-hour session for £100.